The world is bracing for a global cyberwar as a result of current political events. Governments and hackers can carry out cyberattacks in an effort to destabilize economies and undermine democracy. State-funded cyber warfare teams have spent years studying vulnerabilities rather than launching attacks.

An important shift has occurred: bad actors from hostile countries have emerged and must be taken seriously. The worst offenders in this new cyberwarfare campaign are no longer hiding. Experts now believe that a country could conduct more sophisticated cyberattacks on national and commercial networks. Many countries are capable of conducting cyberattacks against other countries, and all parties appear to be prepared for cyber clashes.

So, how would cyberwarfare play out, and how can organizations defend against it?

The first step is to presume that your network has been penetrated or will be compromised soon, and that several attack routes will be employed to disrupt business continuity or vital infrastructure.

Denial-of-service (DoS/DDoS) attacks can cause widespread panic by overloading network infrastructure and assets, whether servers, communication lines, or other critical technologies in a region, and rendering them inoperable.

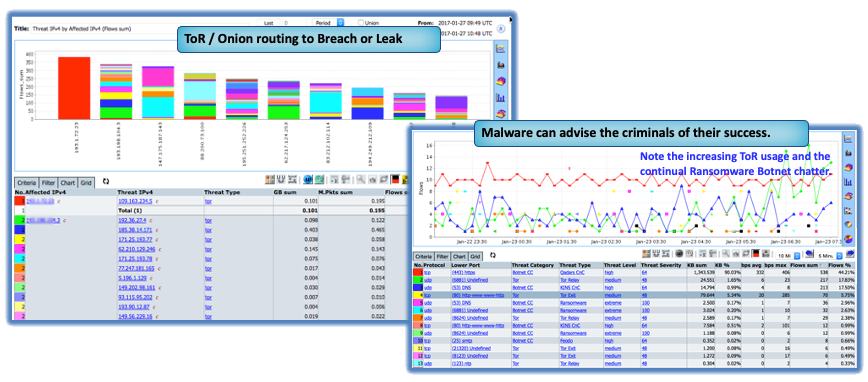

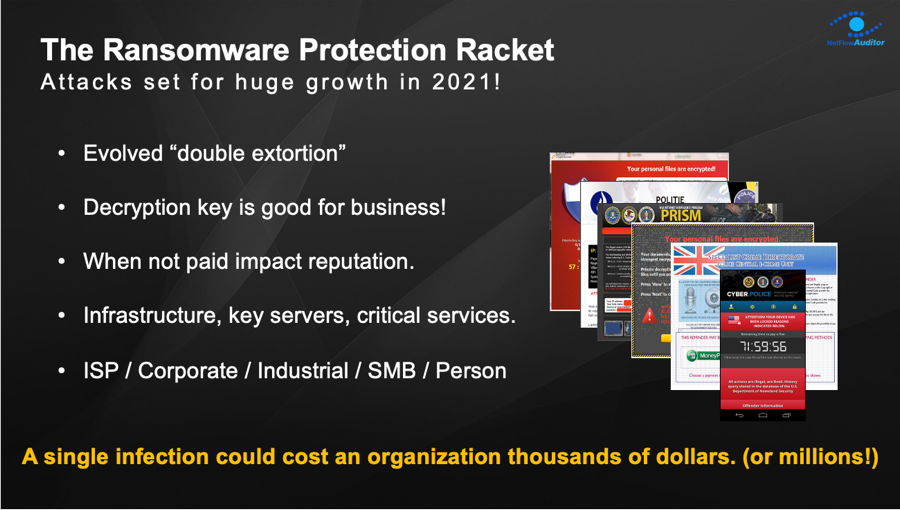

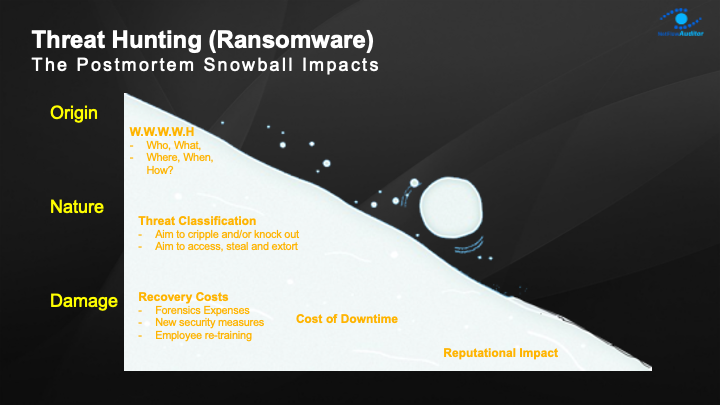

In 2021, ransomware became the most popular criminal tactic, and in 2022 state cyber warfare teams are keen to use it for first strikes, propaganda, and military fundraising. It is only a matter of time before it escalates. In politically motivated attacks, ransomware is used to encrypt computers and render them inoperable. Even when it is built on publicly accessible ransomware code, it is now considered weaponized malware because there is little to no possibility that a decryption key will be released. Ransomware attacks by financially motivated criminals have a different objective, and they must be identified before they cause financial and social damage, as detailed in a recent RANSOMWARE PAPER.

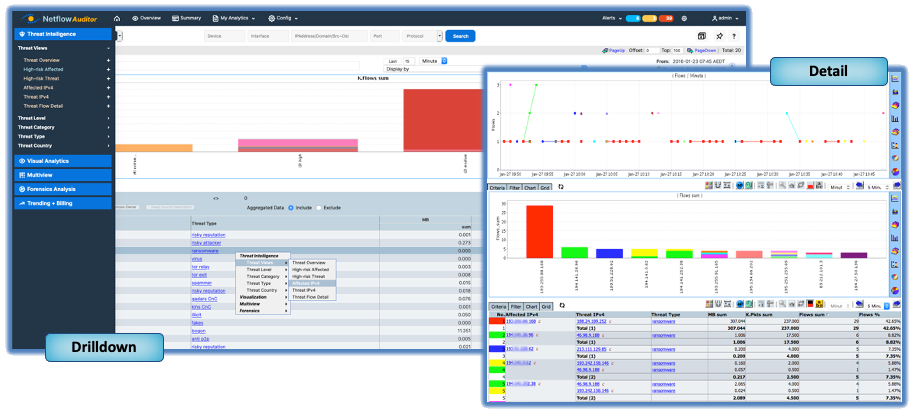

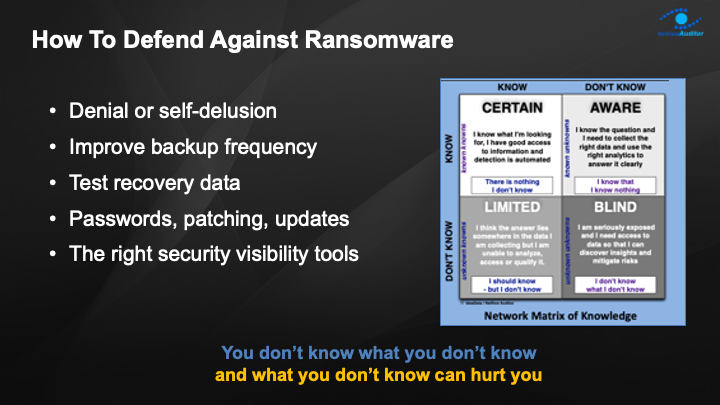

To win the cyberwar against either cyber extortion or cyberwarfare attacks, you must first have a complete 360-degree view of your network, with the deep transparency and intelligent context needed to detect dangers within your data.

Given what we already know and the fact that more is continually being discovered, it makes sense to evaluate our one-of-a-kind integrated Predictive AI Baselining and Cyber Detection solution.

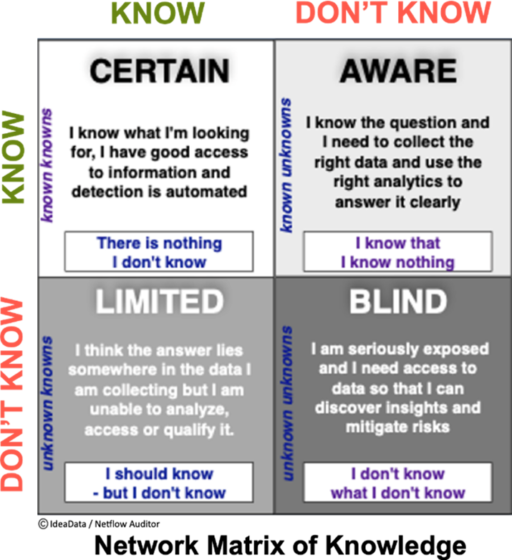

YOU DON’T KNOW WHAT YOU DON’T KNOW!

AND IT’S WHAT WE DON’T SEE THAT POSES THE BIGGEST THREATS AND INVISIBLE DANGERS!

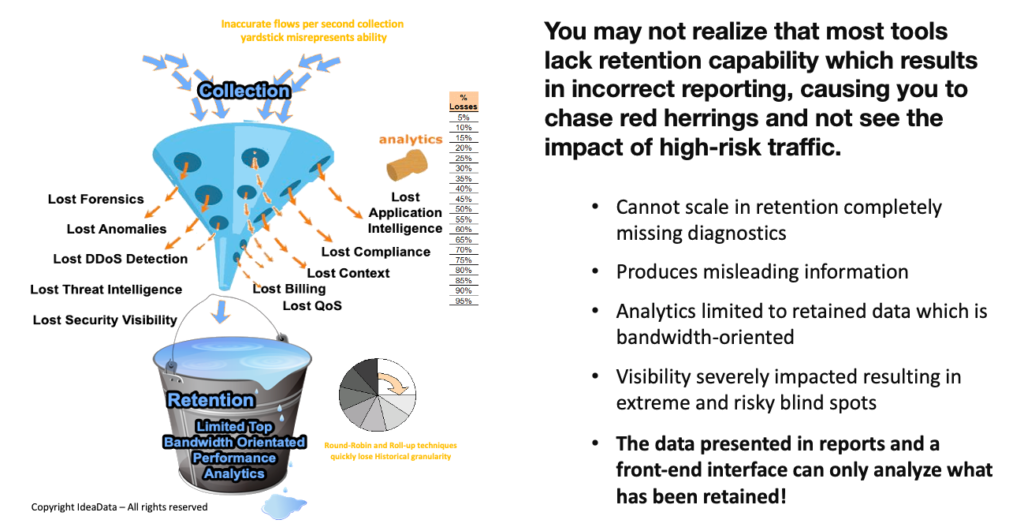

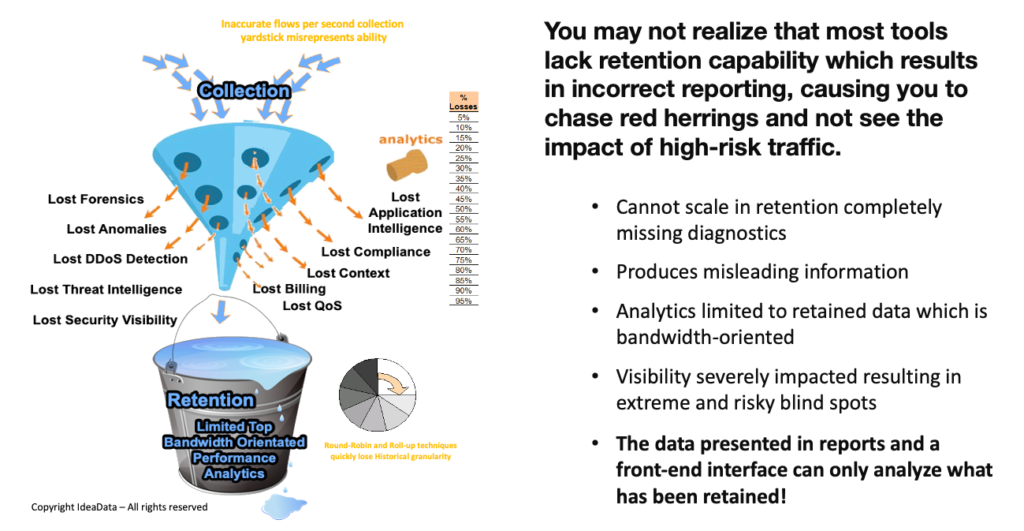

You may be surprised to learn that most tools lack the REAL Visibility that could have prevented attacks on a network and its local and cloud-connected assets. There are some serious shortcomings in the base designs of other flow solutions that result in their inability to scale in retention.

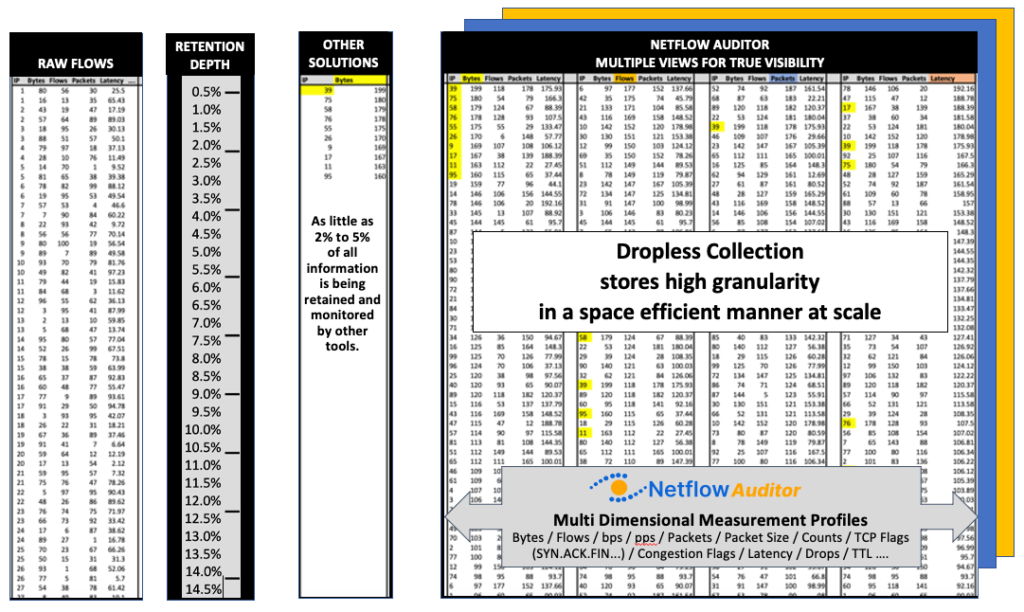

This is why smart analysts are realizing that Threat Intelligence and Flow Analytics today are all about having access to long-term granular intelligence. From a forensics perspective, you can only analyze the data you retain. With large and growing network and cloud data flows, most tools (regardless of their marketing claims) cannot scale in retention, so they drop records and keep only what they believe is salient data.

Imputed outcome data leads to misleading results and missing data causes high risk and loss!

So how exactly do you go about defending your organization's network and connected assets?

Our approach with CySight focuses on solving Cyber and Network Visibility using granular Collection and Retention with machine learning and A.I. CySight was designed from the ground up with specialized metadata collection and retention techniques, thereby solving the issues of archiving huge flow feeds in the smallest footprint and at the highest granularity available in the marketplace.

Network issues are broad and diverse and can occur from many points of entry, both external and internal. The network may be used to download or host illicit materials and leak intellectual property.

Additionally, ransomware and other cyberattacks continue to impact businesses, so you need both machine learning and endpoint threat intelligence to provide a complete view of risk.

The idea of flow-based analytics is simple, yet it is potentially the most powerful tool for finding ransomware and other network and cloud issues. The footprints of all communications are sent in the flow data, and given the right tools you could retain all the evidence of an attack, infiltration, or exfiltration.

However, not all flow analytics solutions are created equal, and when a tool cannot scale in retention the NetFlow ideal becomes unattainable. For a recently discovered ransomware or Trojan, such as “WannaCry”, it is helpful to see whether it has been active in the past and when it started.
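As a rough illustration of that kind of look-back, the sketch below scans retained flow records for the earliest contact each internal host made with a listed endpoint. The `FlowRecord` fields, the `first_seen` helper, and all addresses are hypothetical stand-ins chosen for this example; they do not represent CySight's schema or API.

```python
from dataclasses import dataclass
from datetime import datetime


@dataclass(frozen=True)
class FlowRecord:
    """One retained flow record (illustrative fields, not a vendor schema)."""
    start: datetime
    src_ip: str
    dst_ip: str
    dst_port: int


def first_seen(flows, indicator_ips):
    """Return {source host: earliest flow start} for hosts that contacted an indicator."""
    earliest = {}
    for flow in flows:
        if flow.dst_ip in indicator_ips:
            previous = earliest.get(flow.src_ip)
            if previous is None or flow.start < previous:
                earliest[flow.src_ip] = flow.start
    return earliest


# Hypothetical retained history. 203.0.113.7 is a documentation-range address
# standing in for a known-bad endpoint; port 445 (SMB) is the port WannaCry
# used to spread.
history = [
    FlowRecord(datetime(2022, 1, 3, 9, 15), "10.0.0.5", "198.51.100.20", 443),
    FlowRecord(datetime(2022, 1, 9, 2, 40), "10.0.0.8", "203.0.113.7", 445),
    FlowRecord(datetime(2022, 2, 1, 14, 5), "10.0.0.5", "203.0.113.7", 445),
    FlowRecord(datetime(2022, 2, 2, 14, 5), "10.0.0.8", "203.0.113.7", 445),
]
bad_endpoints = {"203.0.113.7"}

for host, when in sorted(first_seen(history, bad_endpoints).items()):
    print(f"{host} first contacted a listed endpoint at {when.isoformat()}")
```

The answer is only as good as the history behind it: if the January records had been dropped, the same query would report a first contact weeks later than the real one.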

Another important aspect is having the context to analyze all the related traffic, so that you can identify concurrent exfiltration of an organization’s Intellectual Property and quantify and mitigate the risk. Threat hunting for RANSOMWARE requires multi-focal analysis at a granular level that simply cannot be attained by sampling methods. It does little good to be alerted to a possible threat without having the detail to understand context and impact. A hacker who has control of your system will likely install multiple back doors on various interrelated systems so they can return when you are off guard.
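To make the sampling point concrete, here is a small back-of-the-envelope sketch. It assumes a simplified model in which each packet is sampled independently with probability 1/N; real exporters may sample deterministically or per flow, so treat the numbers as indicative only. The function name `miss_probability` is our own.

```python
def miss_probability(packets_in_flow: int, sampling_rate_n: int) -> float:
    """Chance that independent 1-in-N packet sampling sees none of a flow's packets."""
    if packets_in_flow < 0 or sampling_rate_n < 1:
        raise ValueError("packets_in_flow must be >= 0 and sampling_rate_n >= 1")
    # Each packet is skipped with probability (1 - 1/N); the flow is invisible
    # only if every one of its packets is skipped.
    return (1.0 - 1.0 / sampling_rate_n) ** packets_in_flow


for packets in (5, 20, 100, 1000):
    p = miss_probability(packets, 1000)
    print(f"{packets:>5} packets at 1-in-1000 sampling: {p:.1%} chance the flow is never seen")
```

Under this model, short, quiet conversations such as a beacon to a command server are the ones most likely to vanish from sampled data, and those are often the flows a threat hunter most needs.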

CySight Turbocharges Flow and Cloud analytics for SecOps and NetOps

As with all CySight Predictive AI Baselining analytics and detection, you don’t have to do any heavy lifting. We do it all for you!

There is no need to create or maintain special groups with Ransomware or other endpoints of ill-repute. Every CySight instance is built to keep itself aware of new threats that are automatically downloaded in a secure pipe from our Threat Intelligence qualification engine that collects, collates, and categorizes threats from around the globe or from partner threat feeds.

CySight identifies your systems conversing with Bad Actors and allows you to backtrack through historical data to see how long the communication has been going on.

Summary

IdeaData’s CySight software is capable of the highest level of granularity, scalability, and flexibility available in the network and cloud flow metadata market and supports the broadest range of flow-capable vendors and flow logs. CySight’s Predictive AI Baselining, Intelligent Visibility, Dropless Collection, automation, and machine intelligence reduce the heavy lifting in alerting, auditing, and discovering your network, making threat intelligence, anomaly detection, forensics, compliance, performance analytics, and IP accounting a breeze!

Let us help you today. Please schedule a time to meet: https://calendly.com/cysight/